Latest Article

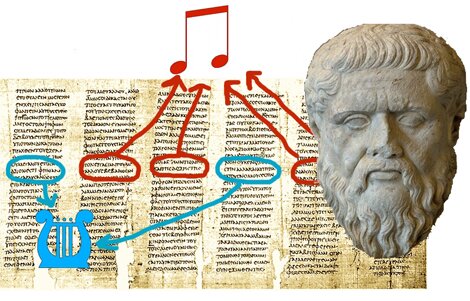

Leo Strauss proved wrong about Plato

Jay Kennedy on how surprising new research shows that Leo Strauss got Plato wrong.

Featured Article

Stephen Stich and Wesley Buckwalter present an experimental philosophy parable

Announcements

- In the latest microphilosophy podcast, tpm's editor-in-chief Julian Baggini talks to John Gray about some of the ideas that emerge from his latest book. Download from this link or iTunes. The podcast was recorded at the Arnolfini in May, during the Bristol Festival of Ideas.

- Can artificial intelligence teach us about what it means to be human? That is the fascinating question behind Brian Christian's recent book, The Most Human Human. In his latest microphilosophy podcast, Julian Baggini is in conversation with Christian. More information here or download from iTunes.

- tpm's editor-in-chief Julian Baggini has started a new podcast series, microphilosophy, which replaces his popular Philosophy Monthly. Each edition will be an interview, talk, discussion or feature, no longer than half an hour and usually much shorter. The first is an interview with the philosopher and theologian Richard Swinburne, conducted for Julian's new book, The Ego Trick. More podcasts relating to the book will follow over the coming weeks. You can download or listen to the podcast here and at iTunes.

- Professor Peter Adamson of King's College London has started a new series of podcasts covering the history of philosophy. There's full information here and it's also available through iTunes.

- We have just finished a major rebuild of our archive, which means that, for the first time, every issue from our start in 1997 up to the current one is available, as it originally appeared, completely free to subscribers. The archive is powered by ExactEditions and you can browse it here. There is also an iPhone app, Exactly, which you can use to read the magazines on your iPhone or iPad. If you’re a subscriber and you don’t have a username and password, go here to find out how to access the archive.

Welcome to TPM: The Philosophers’ Magazine

Updated every Tuesday and Friday, tpm’s website features articles from current and back issues of the magazine, as well as some online exclusives.

Recent Articles

The Skeptic

August 30, 2011

Author: Wendy M Grossman

Hacker ethics

August 23, 2011

Author: Andrew Zimmerman Jones
